Configure AI Gateway on model serving endpoints

In this article, you learn how to configure Mosaic AI Gateway on a model serving endpoint.

Requirements

- A Databricks workspace in a region that supports external models.

- Complete steps 1 and 2 of Create an external model serving endpoint.

Configure AI Gateway using the UI

This section shows how to configure AI Gateway during endpoint creation using the Serving UI.

If you prefer to do this programmatically, see the Notebook example.

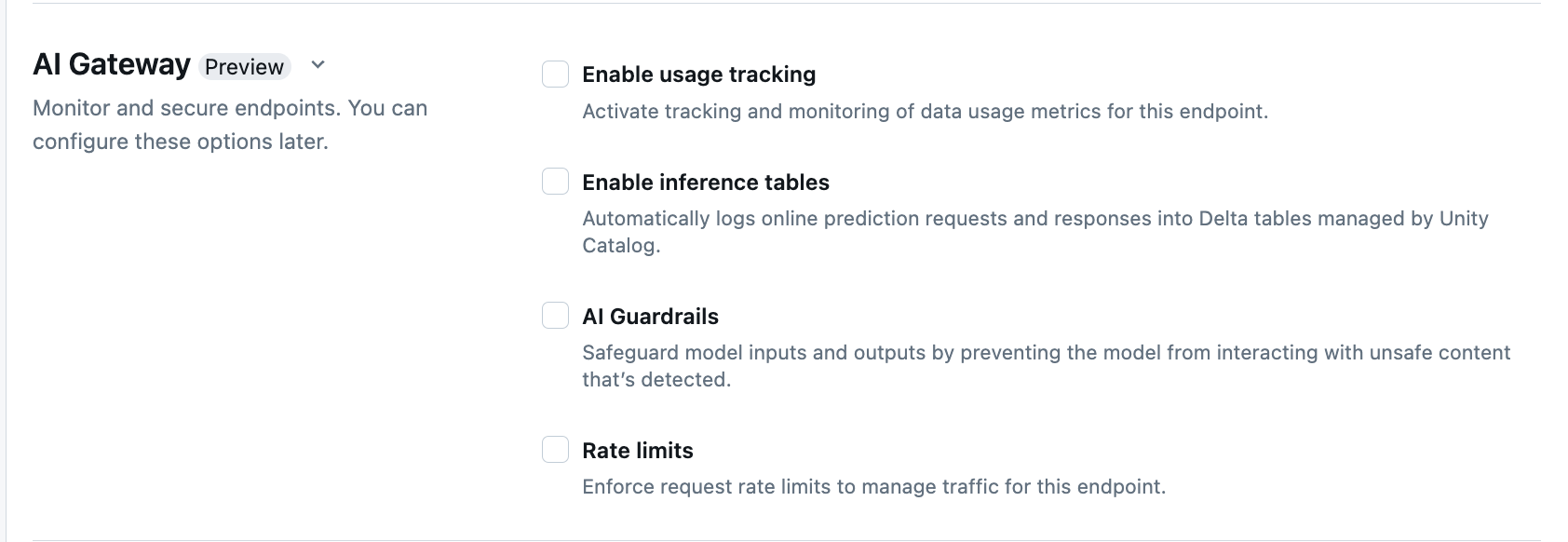

In the AI Gateway section of the endpoint creation page, you can individually configure the following AI Gateway features:

| Feature | How to enable | Details |
|---|---|---|
| Usage tracking | Select Enable usage tracking to enable tracking and monitoring of data usage metrics. | - You must have Unity Catalog enabled. - Account admins must enable the serving system table schema before using the system tables: system.serving.endpoint_usage, which captures token counts for each request to the endpoint, and system.serving.served_entities, which stores metadata for each external model. - See Usage tracking table schemas. - Only account admins have permission to view or query the served_entities table or endpoint_usage table, even though the user that manages the endpoint must enable usage tracking. See Grant access to system tables. - The input and output token counts are estimated as (text_length+1)/4 if the token count is not returned by the model. |
| Payload logging | Select Enable inference tables to automatically log requests and responses from your endpoint into Delta tables managed by Unity Catalog. | - You must have Unity Catalog enabled and CREATE_TABLE access in the specified catalog schema. - Inference tables enabled by AI Gateway have a different schema than inference tables created for model serving endpoints that serve custom models. See AI Gateway-enabled inference table schema. - Payload logging data populates these tables less than an hour after querying the endpoint. - Payloads larger than 1 MB are not logged. - Streaming is supported. In streaming scenarios, the response payload aggregates the responses of all returned chunks. |
| AI Guardrails | See Configure AI Guardrails in the UI. | - Guardrails prevent the model from interacting with unsafe and harmful content that is detected in model inputs and outputs. - Output guardrails are not supported for embeddings models or for streaming. |
| Rate limits | You can enforce request rate limits to manage traffic for your endpoint on a per-user and per-endpoint basis. | - Rate limits are defined in queries per minute (QPM). - The default is No limit for both per user and per endpoint. |
| Traffic routing | To configure traffic routing on your endpoint, see Serve multiple external models to an endpoint. | |

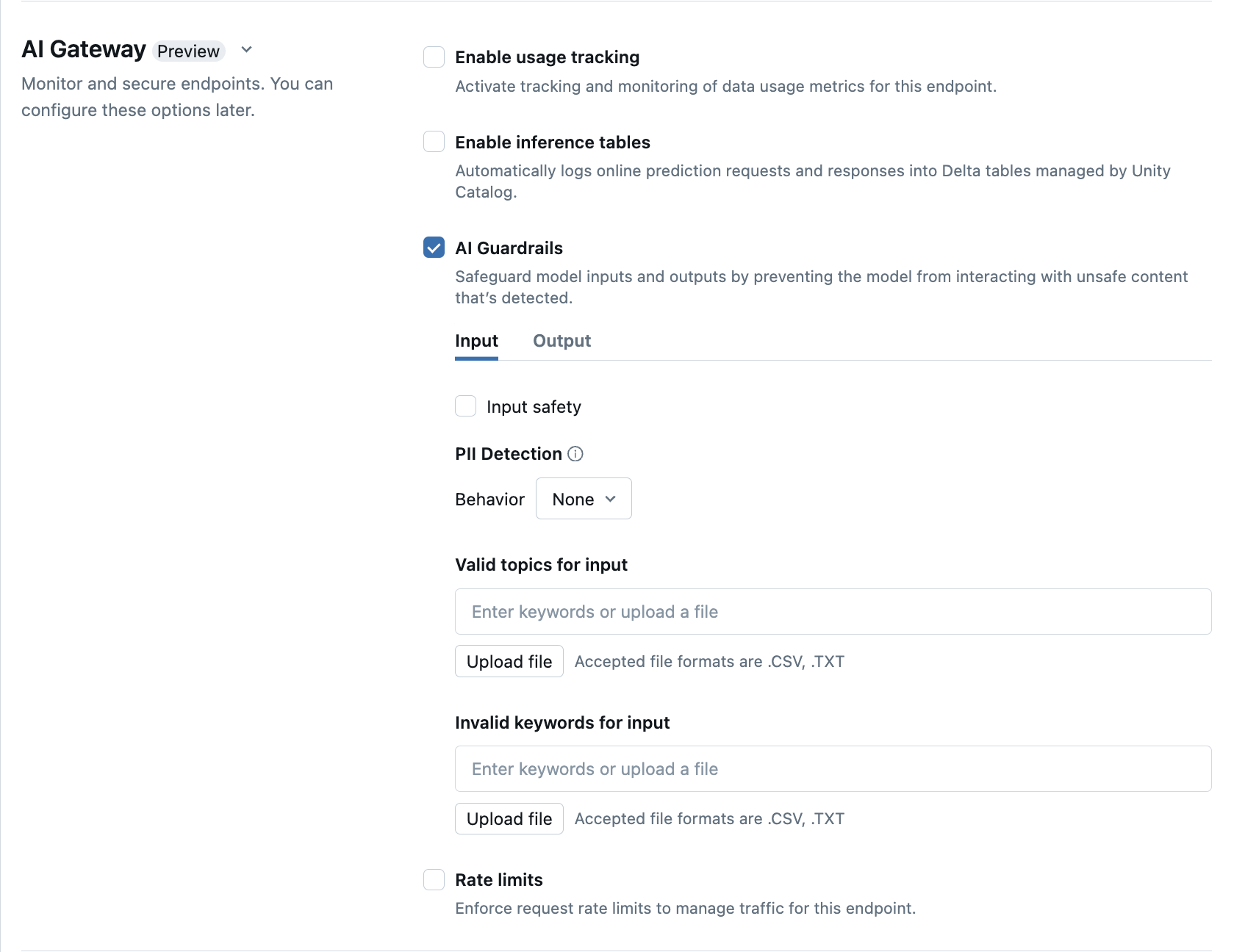
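
The fallback token estimate used by usage tracking, (text_length+1)/4, can be sketched in a few lines of Python. This is an illustration only: the function name is hypothetical, and rounding down to a whole number is an assumption, since the formula does not say how fractional results are handled.

```python
def estimate_token_count(text: str) -> int:
    """Estimate tokens as (text_length + 1) / 4.

    Assumption: the result is rounded down to a whole number.
    """
    return (len(text) + 1) // 4


# A 19-character prompt gives (19 + 1) // 4
print(estimate_token_count("What is Databricks?"))
```

Because this is a character-based heuristic, treat the resulting counts as approximate when the model does not return its own token counts.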

Configure AI Guardrails in the UI

The following table shows how to configure supported guardrails.

| Guardrail | How to enable | Details |
|---|---|---|
| Safety | Select Safety to enable safeguards to prevent your model from interacting with unsafe and harmful content. | |
| Personally identifiable information (PII) detection | Select PII detection to detect PII data such as names, addresses, and credit card numbers. | |
| Valid topics | You can type topics directly into this field. If you have multiple entries, press Enter after each topic. Alternatively, you can upload a .csv or .txt file. | A maximum of 50 valid topics can be specified. Each topic cannot exceed 100 characters. |
| Invalid keywords | You can type keywords directly into this field. If you have multiple entries, press Enter after each keyword. Alternatively, you can upload a .csv or .txt file. | A maximum of 50 invalid keywords can be specified. Each keyword cannot exceed 100 characters. |
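
If you prepare a valid topics or invalid keywords file ahead of time, it can help to check it against the limits above (at most 50 entries, each at most 100 characters) before uploading. The following is a minimal sketch; the function and constant names are hypothetical, and trimming whitespace and skipping blank entries are assumptions about how such a file should be cleaned.

```python
MAX_ENTRIES = 50         # limit stated for valid topics and invalid keywords
MAX_ENTRY_LENGTH = 100   # per-entry character limit


def validate_guardrail_entries(entries: list[str]) -> list[str]:
    """Return cleaned entries, or raise ValueError if a limit is exceeded."""
    # Assumption: surrounding whitespace and blank entries are not meaningful.
    cleaned = [entry.strip() for entry in entries if entry.strip()]
    if len(cleaned) > MAX_ENTRIES:
        raise ValueError(f"{len(cleaned)} entries given; the maximum is {MAX_ENTRIES}")
    too_long = [entry for entry in cleaned if len(entry) > MAX_ENTRY_LENGTH]
    if too_long:
        raise ValueError(f"{len(too_long)} entries exceed {MAX_ENTRY_LENGTH} characters")
    return cleaned


topics = validate_guardrail_entries(["billing", " shipping ", ""])
print(topics)
```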

Usage tracking table schemas

The system.serving.served_entities usage tracking system table has the following schema:

| Column name | Description | Type |
|---|---|---|
| served_entity_id | The unique ID of the served entity. | STRING |
| account_id | The customer account ID for Delta Sharing. | STRING |
| workspace_id | The customer workspace ID of the serving endpoint. | STRING |
| created_by | The ID of the creator. | STRING |
| endpoint_name | The name of the serving endpoint. | STRING |
| endpoint_id | The unique ID of the serving endpoint. | STRING |
| served_entity_name | The name of the served entity. | STRING |
| entity_type | Type of the entity that is served. Can be FEATURE_SPEC, EXTERNAL_MODEL, FOUNDATION_MODEL, or CUSTOM_MODEL. | STRING |
| entity_name | The underlying name of the entity. Different from the served_entity_name which is a user provided name. For example, entity_name is the name of the Unity Catalog model. | STRING |
| entity_version | The version of the served entity. | STRING |
| endpoint_config_version | The version of the endpoint configuration. | INT |
| task | The task type. Can be llm/v1/chat, llm/v1/completions, or llm/v1/embeddings. | STRING |
| external_model_config | Configurations for external models. For example, {Provider: OpenAI} | STRUCT |
| foundation_model_config | Configurations for foundation models. For example, {min_provisioned_throughput: 2200, max_provisioned_throughput: 4400} | STRUCT |
| custom_model_config | Configurations for custom models. For example, {min_concurrency: 0, max_concurrency: 4, compute_type: CPU} | STRUCT |
| feature_spec_config | Configurations for feature specifications. For example, {min_concurrency: 0, max_concurrency: 4, compute_type: CPU} | STRUCT |
| change_time | Timestamp of change for the served entity. | TIMESTAMP |
| endpoint_delete_time | Timestamp of entity deletion. The endpoint is the container for the served entity. After the endpoint is deleted, the served entity is also deleted. | TIMESTAMP |

The system.serving.endpoint_usage usage tracking system table has the following schema:

| Column name | Description | Type |
|---|---|---|
| account_id | The customer account ID. | STRING |
| workspace_id | The customer workspace ID of the serving endpoint. | STRING |
| client_request_id | The user-provided request identifier that can be specified in the model serving request body. | STRING |
| databricks_request_id | An Azure Databricks-generated request identifier attached to all model serving requests. | STRING |
| requester | The ID of the user or service principal whose permissions are used for the invocation request of the serving endpoint. | STRING |
| status_code | The HTTP status code that was returned from the model. | INTEGER |
| request_time | The timestamp at which the request is received. | TIMESTAMP |
| input_token_count | The token count of the input. | LONG |
| output_token_count | The token count of the output. | LONG |
| input_character_count | The character count of the input string or prompt. | LONG |
| output_character_count | The character count of the output string of the response. | LONG |
| usage_context | The user-provided map containing identifiers of the end user or the customer application that makes the call to the endpoint. See Further define usage with usage_context. | MAP |
| request_streaming | Whether the request is in stream mode. | BOOLEAN |
| served_entity_id | The unique ID used to join with the system.serving.served_entities dimension table to look up information about the endpoint and served entity. | STRING |
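
Because served_entity_id is the join key between the two system tables, token usage can be rolled up per endpoint with a single query. The sketch below only builds the SQL text; the helper name and the seven-day default window are illustrative assumptions, and it has not been run against a live workspace. If served_entities turns out to hold more than one row per served_entity_id, deduplicate it before joining so that token counts are not double counted.

```python
def build_usage_by_endpoint_query(days: int = 7) -> str:
    """Build a SQL query that sums token counts per endpoint and served entity."""
    if not isinstance(days, int) or days <= 0:
        raise ValueError("days must be a positive integer")
    return f"""
SELECT
  se.endpoint_name,
  se.served_entity_name,
  SUM(eu.input_token_count) AS total_input_tokens,
  SUM(eu.output_token_count) AS total_output_tokens
FROM system.serving.endpoint_usage AS eu
JOIN system.serving.served_entities AS se
  ON eu.served_entity_id = se.served_entity_id
WHERE eu.request_time >= current_timestamp() - INTERVAL {days} DAYS
GROUP BY se.endpoint_name, se.served_entity_name
ORDER BY total_input_tokens DESC
""".strip()


query = build_usage_by_endpoint_query(days=7)
print(query)
# In a Databricks notebook, where `spark` is predefined, this could be run as:
# spark.sql(query).show()
```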

Further define usage with usage_context

When you query an external model with usage tracking enabled, you can provide the usage_context parameter with type Map[String, String]. The usage context mapping appears in the usage tracking table in the usage_context column. The usage_context map size cannot exceed 10 KiB.

Account admins can aggregate different rows based on the usage context to get insights and can join this information with the information in the payload logging table. For example, you can add end_user_to_charge to the usage_context for tracking cost attribution for end users.

    {
      "messages": [
        {
          "role": "user",
          "content": "What is Databricks?"
        }
      ],
      "max_tokens": 128,
      "usage_context": {
        "use_case": "external",
        "project": "project1",
        "priority": "high",
        "end_user_to_charge": "abcde12345",
        "a_b_test_group": "group_a"
      }
    }
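
A client can assemble this request body and guard against the 10 KiB usage_context limit before sending. The following is a minimal sketch: the helper name is hypothetical, and measuring the map as UTF-8 encoded JSON is an assumption, because the text above does not say how the size is computed.

```python
import json

MAX_USAGE_CONTEXT_BYTES = 10 * 1024  # 10 KiB limit stated above


def build_chat_request(prompt: str, usage_context: dict, max_tokens: int = 128) -> dict:
    """Build a chat request body that carries a usage_context map."""
    # usage_context is a Map[String, String], so reject non-string keys or values.
    for key, value in usage_context.items():
        if not isinstance(key, str) or not isinstance(value, str):
            raise TypeError("usage_context keys and values must be strings")
    # Assumption: size is measured on the UTF-8 encoded JSON form of the map.
    size = len(json.dumps(usage_context).encode("utf-8"))
    if size > MAX_USAGE_CONTEXT_BYTES:
        raise ValueError(f"usage_context is {size} bytes; the limit is {MAX_USAGE_CONTEXT_BYTES}")
    return {
        "messages": [{"role": "user", "content": prompt}],
        "max_tokens": max_tokens,
        "usage_context": dict(usage_context),
    }


body = build_chat_request(
    "What is Databricks?",
    {"project": "project1", "end_user_to_charge": "abcde12345"},
)
print(json.dumps(body, indent=2))
```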

AI Gateway-enabled inference table schema

Inference tables enabled using AI Gateway have the following schema:

| Column name | Description | Type |
|---|---|---|
| request_date | The UTC date on which the model serving request was received. | DATE |
| databricks_request_id | An Azure Databricks-generated request identifier attached to all model serving requests. | STRING |
| client_request_id | An optional client-generated request identifier that can be specified in the model serving request body. | STRING |
| request_time | The timestamp at which the request is received. | TIMESTAMP |
| status_code | The HTTP status code that was returned from the model. | INT |
| sampling_fraction | The sampling fraction used in the event that the request was down-sampled. This value is between 0 and 1, where 1 represents that 100% of incoming requests were included. | DOUBLE |
| execution_duration_ms | The time in milliseconds for which the model performed inference. This does not include overhead network latencies and only represents the time it took for the model to generate predictions. | BIGINT |
| request | The raw request JSON body that was sent to the model serving endpoint. | STRING |
| response | The raw response JSON body that was returned by the model serving endpoint. | STRING |
| served_entity_id | The unique ID of the served entity. | STRING |
| logging_error_codes | | ARRAY |
| requester | The ID of the user or service principal whose permissions are used for the invocation request of the serving endpoint. | STRING |
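
Because the request and response columns hold raw JSON strings, they usually need to be parsed before analysis. The sketch below works on a plain Python dict that stands in for one table row. The helper names and sample values are hypothetical, and treating a missing or malformed payload as None is an assumption about how you might want to handle such rows.

```python
import json
from typing import Any, Optional


def parse_json_column(value: Optional[str]) -> Any:
    """Parse a raw JSON string column; return None when it is empty or malformed."""
    if not value:
        return None
    try:
        return json.loads(value)
    except json.JSONDecodeError:
        return None


def summarize_inference_row(row: dict) -> dict:
    """Turn one inference table row into a small summary with parsed payloads."""
    status = row.get("status_code")
    return {
        "databricks_request_id": row.get("databricks_request_id"),
        "succeeded": status is not None and 200 <= int(status) < 300,
        "execution_duration_ms": row.get("execution_duration_ms"),
        "request": parse_json_column(row.get("request")),
        "response": parse_json_column(row.get("response")),
    }


# Hypothetical row shaped like the schema above; the values are made up.
sample_row = {
    "databricks_request_id": "req-123",
    "status_code": 200,
    "execution_duration_ms": 850,
    "request": '{"messages": [{"role": "user", "content": "What is Databricks?"}], "max_tokens": 128}',
    "response": None,
}
summary = summarize_inference_row(sample_row)
print(summary["succeeded"], summary["request"]["max_tokens"])
```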

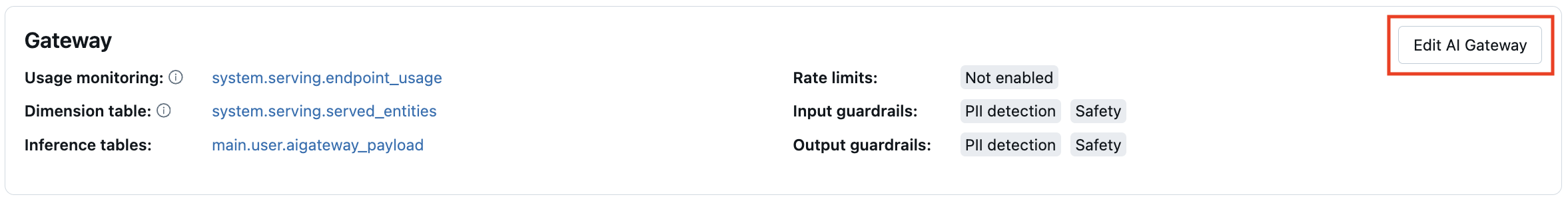

Update AI Gateway features on endpoints

You can update AI Gateway features on model serving endpoints that previously had them enabled and on endpoints that did not. Updates to AI Gateway configurations take about 20-40 seconds to be applied; rate limiting updates can take up to 60 seconds.

The following shows how to update AI Gateway features on a model serving endpoint using the Serving UI.

In the Gateway section of the endpoint page, you can see which features are enabled. To update these features, click Edit AI Gateway.
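
For a programmatic update, the same settings can be expressed as a JSON payload. The following sketch only builds that payload and sends nothing. The field names (usage_tracking_config, inference_table_config, rate_limits, calls, key, renewal_period) and the PUT /api/2.0/serving-endpoints/{name}/ai-gateway route mentioned in the comment are assumptions based on the serving endpoints REST API, so verify them against the REST API reference before relying on them. The helper name and sample values are hypothetical.

```python
from typing import Optional


def build_ai_gateway_config(
    catalog: str,
    schema: str,
    table_prefix: str,
    user_qpm: Optional[int] = None,
    endpoint_qpm: Optional[int] = None,
) -> dict:
    """Build an AI Gateway configuration payload (field names are assumptions)."""
    rate_limits = []
    # Rate limits are expressed in queries per minute (QPM); None means no limit.
    if user_qpm is not None:
        rate_limits.append({"calls": user_qpm, "key": "user", "renewal_period": "minute"})
    if endpoint_qpm is not None:
        rate_limits.append({"calls": endpoint_qpm, "key": "endpoint", "renewal_period": "minute"})
    return {
        "usage_tracking_config": {"enabled": True},
        "inference_table_config": {
            "enabled": True,
            "catalog_name": catalog,
            "schema_name": schema,
            "table_name_prefix": table_prefix,
        },
        "rate_limits": rate_limits,
    }


config = build_ai_gateway_config("main", "serving_logs", "my_endpoint", user_qpm=60)
print(config)
# Assumed usage: send `config` as the JSON body of
# PUT /api/2.0/serving-endpoints/{name}/ai-gateway with a valid access token.
```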

Notebook example

The following notebook shows how to programmatically enable and use Databricks Mosaic AI Gateway features to manage and govern models from providers. See the following for REST API details: