Use Healthcare data foundations in healthcare data solutions (preview)

[This article is prerelease documentation and is subject to change.]

The Healthcare data foundations capability enhances Fast Healthcare Interoperability Resources (FHIR) data processing within the data lake environment. It structures data for analytics and AI/machine learning modeling. Its data pipelines flatten or transform the ingested FHIR JSON data into a tabular structure that you can access using traditional SQL tools. The capability enables exploratory analysis on various aspects of healthcare data, including clinical, financial (claims and explanation of benefits), and administrative data modules.

The foundation of healthcare data solutions (preview) is based on the medallion lakehouse architecture. In this architecture, data is logically organized and processed using a multi-layered approach. The goal is to improve the structure and quality of data as it flows through each layer. The first layer is bronze, which maintains the raw state of the data source. The second layer is silver, which represents a validated, enriched version of the data. The third and final layer is gold, which is highly refined and aggregated. After flattening the data, you can use traditional SQL tools to explore the clinical data. Healthcare data foundations also supports the following functionalities:

- Microsoft Fabric notebooks that support seamless interaction with lakehouse data using popular PySpark and Python libraries for data exploration and processing.

- SQL endpoints with T-SQL to query the tabular data and conduct ad hoc or exploratory analysis.

- Power BI to visualize data stored in OneLake. You can create dashboards, reports, charts, and graphs to explore and present the data in a meaningful way.

To learn more about the capability and understand how to deploy and configure it, see:

The capability also includes the global configuration notebook healthcare#_msft_config_notebook that helps you set up and manage the configuration necessary for all the data transformations in healthcare data solutions (preview).

Important

Don't run this notebook directly, as other notebooks execute it during setup.

You need the Healthcare data foundations capability to run other healthcare data solutions (preview) capabilities. Hence, make sure that you deploy this capability successfully before you deploy other capabilities. To run the Healthcare data foundations pipelines, you must set up and execute the FHIR data ingestion pipeline first for ingesting the FHIR data or sample data. For more information, see:

Prerequisites

Before you run the FHIR export service notebook, complete the following steps:

- If you're not using a FHIR server, set up and connect the sample data to the Fabric workspace as explained in Deploy sample data.

- Deploy and configure Healthcare data foundations.

- Deploy, configure, and execute the FHIR data ingestion capability.

- Configure the healthcare#_msft_config_notebook as explained in Configure the global configuration notebook.

- Set up supplementary configuration for the other notebooks, based on your requirements. For detailed guidance, see Notebook configuration.

Bronze ingestion

This section explains how to use the healthcare#_msft_raw_bronze_ingestion notebook deployed as part of the Healthcare data foundations capability. The notebook invokes the BronzeIngestionService module in the healthcare data solutions (preview) library to ingest FHIR data from the NDJSON source files into the corresponding table in the bronze lakehouse. For more information about this notebook, see healthcare#_msft_raw_bronze_ingestion.

You can run the notebook on demand or on a preferred schedule as part of a data pipeline in Microsoft Fabric. For more information, see Data pipeline runs in Fabric.

After you run the notebook, you can verify whether the records are ingested successfully in the corresponding bronze lakehouse table. Run a SELECT query on the bronze lakehouse table, as shown in the following example with the Patient table:
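A minimal sketch of such a verification query, assuming a Fabric notebook that is attached to the bronze lakehouse and a table named Patient. The helper build_verification_query is hypothetical and isn't part of the healthcare data solutions (preview) library. The Spark call is left as a comment because it needs a live Spark session.

```python
def build_verification_query(table_name: str, limit: int = 10) -> str:
    """Build a Spark SQL query that returns a few rows from a lakehouse table.

    Hypothetical helper for illustration only.
    """
    # Reject anything that isn't a plain identifier so the name can't inject SQL.
    if not table_name.isidentifier():
        raise ValueError(f"Unexpected table name: {table_name!r}")
    if limit <= 0:
        raise ValueError("limit must be a positive integer")
    # Spark SQL uses LIMIT; T-SQL on the SQL endpoint would use TOP instead.
    return f"SELECT * FROM {table_name} LIMIT {limit}"


query = build_verification_query("Patient")
print(query)

# In a Fabric notebook (assumption: the bronze lakehouse is the default lakehouse),
# the predefined Spark session could run the query:
# display(spark.sql(query))
```

The same helper could be pointed at a silver lakehouse table after the flattening notebook runs.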

Note

By default, the notebook is configured to utilize the sample data provided with healthcare data solutions (preview). If you want to use your own data and not the sample data, update the source_path_pattern variable in the notebook to point to the location of your data.

Silver flattening

This section explains how to use the healthcare#_msft_bronze_silver_flatten notebook deployed as part of the Healthcare data foundations capability. The notebook invokes the SilverIngestionService module in the healthcare data solutions (preview) library to flatten the FHIR resources from the bronze lakehouse tables and ingest the resulting data into the corresponding silver lakehouse tables. By default, you aren't expected to make any changes to this notebook. If you prefer pointing to different source and target lakehouses, you can change the values in the healthcare#_msft_config_notebook.

We recommend that you schedule this notebook job to run every 4 hours. The initial run might not have data to consume because of concurrent and dependent jobs, which can cause latency. You can reduce this latency by adjusting the frequency of higher layer jobs.

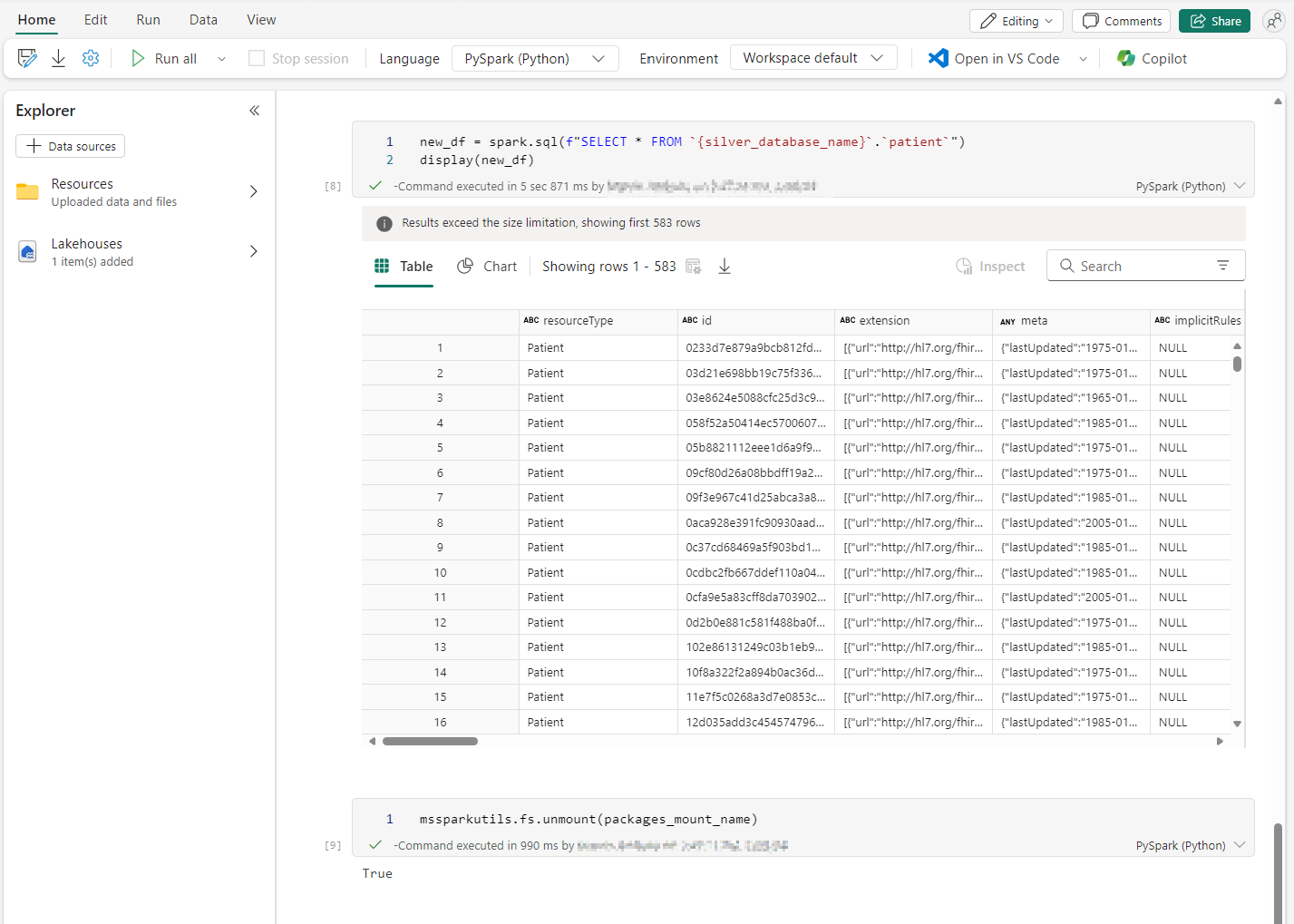

After you run the notebook, you can verify whether the records are successfully ingested in the corresponding silver lakehouse table. Run a SELECT query on the silver lakehouse table, as displayed in the following example with the Patient table:

Guidelines for silver flattening

The following rules govern the flattening of each FHIR domain resource:

- Primitive FHIR elements, such as string, integer, and boolean, are flattened and encoded into native SQL types (in the delta or parquet storage format). A few examples of such FHIR elements are gender and birthDate in the Patient resource.

- Complex and multi-value FHIR elements are persisted as structs and arrays (in the parquet format). Some examples of such FHIR elements are identifier, name, and telecom in the Patient resource.

- Currently, the SQL analytics endpoint doesn't surface complex types (such as structs and arrays). Each complex column is converted to a string representation of the complex dataset and labeled with a _string suffix. You can then query it from the SQL analytics endpoint. Some examples of such columns are name_string, telecom_string, and identifier_string in the Patient resource.
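The flattening rules above can be sketched in plain Python. This is an illustration under stated assumptions, not the SilverIngestionService implementation: the function flatten_resource is hypothetical, the sample Patient is made up, and JSON is assumed as the string representation for the _string columns (the actual serialization format isn't stated here).

```python
import json


def flatten_resource(resource: dict) -> dict:
    """Return one flat row for a FHIR resource (illustrative sketch only)."""
    row = {}
    for name, value in resource.items():
        # Primitive and complex elements both keep a column under their own name.
        row[name] = value
        if isinstance(value, (dict, list)):
            # Complex or multi-value element: add a string twin with a _string
            # suffix. JSON is an assumed stand-in for the real representation.
            row[f"{name}_string"] = json.dumps(value, sort_keys=True)
    return row


# Made-up sample data for illustration.
patient = {
    "resourceType": "Patient",
    "id": "123",
    "gender": "female",
    "birthDate": "1980-05-17",
    "name": [{"family": "Rivera", "given": ["Ana"]}],
    "telecom": [{"system": "phone", "value": "555-0100"}],
}

row = flatten_resource(patient)
for column, value in row.items():
    print(column, "=", value)
```

In this sketch, gender and birthDate stay as single primitive columns, while name and telecom each gain a name_string or telecom_string companion that a SQL client without struct and array support could still read.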

Normalization

Normalization is the process of reducing data redundancy in a table and improving data integrity. The system performs it as an extra step after flattening the data, while transforming data from the bronze layer to the silver layer. Common data types such as datetime fields and reference fields are normalized based on the following HL7 SQL on FHIR guidelines:

- A single reference and resource ID is normalized using the following rules:

  - All resource IDs are normalized by applying the SHA-256 hash function to the following specific format: sourceSystemURL/resourceType/resourceId. This process ensures that each resource has a consistent and unique identifier across the system.

  - The original ID is then preserved as id_orig.

- This approach of normalizing a single resource object is recursively used to normalize an array of reference objects and nested references.

- FHIR date elements are converted to UTC and their original value is persisted in an _orig column.
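The normalization rules above can be sketched as follows. This is a hedged illustration, not the library's code: the function names, the https://example.org/fhir source system URL, the sample resource, the layout of the normalized reference object, and the treatment of timestamps without an offset are all assumptions.

```python
import hashlib
from datetime import datetime, timezone


def normalize_id(source_system_url: str, resource_type: str, resource_id: str) -> str:
    """SHA-256 hash of 'sourceSystemURL/resourceType/resourceId' as a hex string."""
    key = f"{source_system_url}/{resource_type}/{resource_id}"
    return hashlib.sha256(key.encode("utf-8")).hexdigest()


def normalize_references(node, source_system_url: str):
    """Recursively normalize reference objects in nested dicts and lists."""
    if isinstance(node, list):
        return [normalize_references(item, source_system_url) for item in node]
    if isinstance(node, dict):
        out = {k: normalize_references(v, source_system_url) for k, v in node.items()}
        ref = node.get("reference")
        # Only relative references such as "Organization/456" are handled here.
        if isinstance(ref, str) and ref.count("/") == 1:
            resource_type, resource_id = ref.split("/")
            out["id"] = normalize_id(source_system_url, resource_type, resource_id)
            out["id_orig"] = resource_id  # keep the original ID
        return out
    return node


def normalize_resource(resource: dict, source_system_url: str) -> dict:
    """Normalize nested references, then the resource's own ID."""
    out = normalize_references(resource, source_system_url)
    out["id_orig"] = resource["id"]
    out["id"] = normalize_id(source_system_url, resource["resourceType"], resource["id"])
    return out


def to_utc(value: str) -> str:
    """Convert an ISO 8601 timestamp to UTC."""
    parsed = datetime.fromisoformat(value.replace("Z", "+00:00"))
    if parsed.tzinfo is None:
        # Assumption: values without an offset are treated as already in UTC.
        parsed = parsed.replace(tzinfo=timezone.utc)
    return parsed.astimezone(timezone.utc).isoformat()


SOURCE = "https://example.org/fhir"  # hypothetical source system URL
patient = {
    "resourceType": "Patient",
    "id": "123",
    "managingOrganization": {"reference": "Organization/456"},
    "generalPractitioner": [{"reference": "Practitioner/789"}],
}

normalized = normalize_resource(patient, SOURCE)
print(normalized["id"], normalized["id_orig"])

# A date column keeps its original value in a companion _orig column.
effective_orig = "2024-03-01T10:30:00+05:30"
effective = to_utc(effective_orig)
print(effective, effective_orig)
```

Because the hash input includes the source system URL and the resource type, two resources that share a raw ID (for example, a Patient and an Organization both with ID 123) would still receive distinct normalized identifiers.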