Generics based Framework for .Net Hadoop MapReduce Job Submission

Over the past month I have been working on a framework to allow composition and submission of MapReduce jobs using .Net. I have put together two previous blog posts on this, so rather than write a third on the latest change I thought I would create a final composite post. To understand why, let's run through a quick version history of the code:

- Initial release where the values are treated as strings, and serialization was handled through Object.ToString()

- Made minor modifications to the submission APIs

- Modified the Reducer and Combiner types to allow In-Reducer optimizations through the ability to yield a Tuple of the key and value in the Cleanup

- Modified the Combiner and Reducer base classes so that data out of the mapper, in and out of the combiner, and in to the reducer uses a binary formatter. This changed the base classes from strings to objects, meaning the classes can now cast to the expected type rather than performing string parsing

- Added support for multiple mapper keys; with supporting utilities

The latest change takes advantage of the fact that the objects are serialized in binary format. This has allowed the abstract base classes to move away from object-based APIs to ones based on generics, which should greatly simplify the creation of .Net MapReduce jobs.
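To make the difference concrete, here is a minimal sketch contrasting the two API styles. The types `ObjectReducerSketch` and `GenericReducerSketch` are hypothetical stand-ins written for this illustration; they are not the framework's actual base classes, which are listed later in this post.

```csharp
using System;
using System.Collections.Generic;
using System.Linq;

// Hypothetical stand-in for an object-based reducer API (illustration only).
public abstract class ObjectReducerSketch
{
    public abstract IEnumerable<Tuple<string, object>> Reduce(string key, IEnumerable<object> values);
}

// Hypothetical stand-in for a generics-based reducer API (illustration only).
public abstract class GenericReducerSketch<V2, V3>
{
    public abstract IEnumerable<Tuple<string, V3>> Reduce(string key, IEnumerable<V2> values);
}

public class ObjectMin : ObjectReducerSketch
{
    public override IEnumerable<Tuple<string, object>> Reduce(string key, IEnumerable<object> values)
    {
        // Every value has to be cast back to the expected type by hand.
        TimeSpan min = values.Select(v => (TimeSpan)v).Min();
        yield return Tuple.Create(key, (object)min);
    }
}

public class GenericMin : GenericReducerSketch<TimeSpan, TimeSpan>
{
    public override IEnumerable<Tuple<string, TimeSpan>> Reduce(string key, IEnumerable<TimeSpan> values)
    {
        // The compiler already knows the value type, so no casts are needed.
        yield return Tuple.Create(key, values.Min());
    }
}

public static class GenericsSketch
{
    public static void Main()
    {
        var times = new[] { TimeSpan.FromSeconds(5), TimeSpan.FromSeconds(2) };
        foreach (var pair in new GenericMin().Reduce("Android", times))
            Console.WriteLine(pair.Item1 + "\t" + pair.Item2);
    }
}
```

With the generic version a wrong value type becomes a compile-time error rather than an `InvalidCastException` at run time.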

As always to submit MapReduce jobs one can use the following command line syntax:

MSDN.Hadoop.Submission.Console.exe -input "mobile/data/debug/sampledata.txt" -output "mobile/querytimes/debug"

-mapper "MSDN.Hadoop.MapReduceFSharp.MobilePhoneQueryMapper, MSDN.Hadoop.MapReduceFSharp"

-reducer "MSDN.Hadoop.MapReduceFSharp.MobilePhoneQueryReducer, MSDN.Hadoop.MapReduceFSharp"

-file "%HOMEPATH%\MSDN.Hadoop.MapReduce\Release\MSDN.Hadoop.MapReduceFSharp.dll"

The mapper and reducer parameters (more on a combiner option later) are .Net types that derive from the abstract Map and Reduce base classes shown below. The input, output, and file options are analogous to the standard Hadoop Streaming submissions. Under the covers standard Hadoop Streaming is being used, where controlling executables handle the StdIn and StdOut operations and activate the required .Net types. The “file” parameter is required to specify the DLL for the .Net type to be loaded at runtime, in addition to any other required files.
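As a rough illustration of what a controlling executable has to do, the sketch below resolves a type from a "Namespace.Type, Assembly" string, creates an instance, and drives it from a text reader. Everything here is an assumption made for the example: `ILineMapperSketch`, `UpperCaseMapperSketch`, and `StreamingDriverSketch` are hypothetical names, and the values are written as plain text whereas the framework itself uses binary serialization.

```csharp
using System;
using System.Collections.Generic;
using System.IO;

// Hypothetical mapper contract used only for this sketch.
public interface ILineMapperSketch
{
    IEnumerable<Tuple<string, string>> Map(string line);
}

// Hypothetical mapper: emits the upper-cased line as the key with a count of 1.
public class UpperCaseMapperSketch : ILineMapperSketch
{
    public IEnumerable<Tuple<string, string>> Map(string line)
    {
        yield return Tuple.Create(line.ToUpperInvariant(), "1");
    }
}

public static class StreamingDriverSketch
{
    // Resolve an assembly-qualified type name to an instance.
    public static ILineMapperSketch Activate(string typeName)
    {
        Type type = Type.GetType(typeName, throwOnError: true);
        return (ILineMapperSketch)Activator.CreateInstance(type);
    }

    // Read lines, map each one, and write tab-separated key/value pairs.
    public static void Run(ILineMapperSketch mapper, TextReader input, TextWriter output)
    {
        string line;
        while ((line = input.ReadLine()) != null)
        {
            foreach (var pair in mapper.Map(line))
                output.WriteLine(pair.Item1 + "\t" + pair.Item2);
        }
    }

    public static void Main(string[] args)
    {
        // Fall back to the sample mapper when no type name is supplied.
        string typeName = args.Length > 0
            ? args[0]
            : typeof(UpperCaseMapperSketch).AssemblyQualifiedName;
        Run(Activate(typeName), Console.In, Console.Out);
    }
}
```

`Type.GetType` can only resolve the name if the assembly can be found at runtime, which is why the DLL has to be shipped with the job.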

As always the source can be downloaded from:

https://code.msdn.microsoft.com/Framework-for-Composing-af656ef7

Mapper and Reducer Base Classes

The following definitions outline the abstract base classes from which one needs to derive. Let's start with the C# definitions:

C# Abstract Classes

    namespace MSDN.Hadoop.MapReduceBase
    {
        [Serializable]
        [AbstractClass]
        public abstract class MapReduceBase<V2>
        {
            protected MapReduceBase();

            public virtual IEnumerable<Tuple<string, V2>> Cleanup();
            public virtual void Setup();
        }

        [Serializable]
        [AbstractClass]
        public abstract class MapperBaseText<V2> : MapReduceBase<V2>
        {
            protected MapperBaseText();

            public abstract IEnumerable<Tuple<string, V2>> Map(string value);
        }

        [Serializable]
        [AbstractClass]
        public abstract class MapperBaseXml<V2> : MapReduceBase<V2>
        {
            protected MapperBaseXml();

            public abstract IEnumerable<Tuple<string, V2>> Map(XElement element);
        }

        [Serializable]
        [AbstractClass]
        public abstract class MapperBaseBinary<V2> : MapReduceBase<V2>
        {
            protected MapperBaseBinary();

            public abstract IEnumerable<Tuple<string, V2>> Map(string filename, Stream document);
        }

        [Serializable]
        [AbstractClass]
        public abstract class CombinerBase<V2> : MapReduceBase<V2>
        {
            protected CombinerBase();

            public abstract IEnumerable<Tuple<string, V2>> Combine(string key, IEnumerable<V2> values);
        }

        [Serializable]
        [AbstractClass]
        public abstract class ReducerBase<V2, V3> : MapReduceBase<V2>
        {
            protected ReducerBase();

            public abstract IEnumerable<Tuple<string, V3>> Reduce(string key, IEnumerable<V2> values);
        }
    }

The equivalent F# definitions are:

F# Abstract Classes

    namespace MSDN.Hadoop.MapReduceBase

    open System.Collections.Generic
    open System.IO
    open System.Xml.Linq

    [<AbstractClass>]
    type MapReduceBase<'V2>() =
        abstract member Setup: unit -> unit
        default this.Setup() = ()

        abstract member Cleanup: unit -> IEnumerable<string * 'V2>
        default this.Cleanup() = Seq.empty

    [<AbstractClass>]
    type MapperBaseText<'V2>() =
        inherit MapReduceBase<'V2>()
        abstract member Map: value:string -> IEnumerable<string * 'V2>

    [<AbstractClass>]
    type MapperBaseXml<'V2>() =
        inherit MapReduceBase<'V2>()
        abstract member Map: element:XElement -> IEnumerable<string * 'V2>

    [<AbstractClass>]
    type MapperBaseBinary<'V2>() =
        inherit MapReduceBase<'V2>()
        abstract member Map: filename:string -> document:Stream -> IEnumerable<string * 'V2>

    [<AbstractClass>]
    type CombinerBase<'V2>() =
        inherit MapReduceBase<'V2>()
        abstract member Combine: key:string -> values:IEnumerable<'V2> -> IEnumerable<string * 'V2>

    [<AbstractClass>]
    type ReducerBase<'V2, 'V3>() =
        inherit MapReduceBase<'V2>()
        abstract member Reduce: key:string -> values:IEnumerable<'V2> -> IEnumerable<string * 'V3>

The objective in defining these base classes was not only to support creating .Net Mappers and Reducers but also to provide a means for Setup and Cleanup operations to support In-Place Mapper/Combiner/Reducer optimizations, to utilize IEnumerable and sequences for publishing data from all classes, and finally to provide a simple submission mechanism analogous to submitting Java based jobs.

The generic type names V2 and V3 match the names used in the Java definitions. The input into the Mapper is currently always a string (this normally being V1). This is needed because the mapper, in Streaming jobs, performs the projection from the textual input.

For each class a Setup function is provided to allow one to perform tasks related to the instantiation of the class. The Mapper’s Map and Cleanup functions return an IEnumerable consisting of tuples with a Key/Value pair. It is these tuples that represent the mapper’s output. The returned types are written to file using binary serialization.

The Combiner and Reducer take in an IEnumerable of values for each key and reduce this into a key/value enumerable. Once again the Cleanup allows for return values, to allow for In-Reducer optimizations.
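Hadoop Streaming hands the reduce side a stream of key/value records sorted by key, so something has to turn runs of equal keys into the per-key enumerable described above. The following is a minimal sketch of that grouping step, not the framework's actual code; `ReducerGroupingSketch` and `GroupByKeyRuns` are hypothetical names, and the sketch buffers each key's values in a list for simplicity.

```csharp
using System;
using System.Collections.Generic;
using System.Linq;

public static class ReducerGroupingSketch
{
    // Groups a key-sorted stream into one (key, values) batch per run of equal keys.
    public static IEnumerable<Tuple<string, List<V2>>> GroupByKeyRuns<V2>(
        IEnumerable<Tuple<string, V2>> sorted)
    {
        string currentKey = null;
        List<V2> batch = null;

        foreach (var pair in sorted)
        {
            // A change of key closes the current batch.
            if (batch != null && pair.Item1 != currentKey)
            {
                yield return Tuple.Create(currentKey, batch);
                batch = null;
            }

            if (batch == null)
            {
                currentKey = pair.Item1;
                batch = new List<V2>();
            }

            batch.Add(pair.Item2);
        }

        // Emit the final batch, if any input was seen.
        if (batch != null)
            yield return Tuple.Create(currentKey, batch);
    }

    public static void Main()
    {
        var sorted = new[]
        {
            Tuple.Create("Android", 4),
            Tuple.Create("Android", 2),
            Tuple.Create("iOS", 7)
        };

        // Each batch plays the role of the values passed to a single Reduce call.
        foreach (var group in GroupByKeyRuns(sorted))
            Console.WriteLine(group.Item1 + "\t" + group.Item2.Min());
    }
}
```

Because the input is already sorted, only one key's values are held at a time, which keeps memory use proportional to the largest single key.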

Binary and XML Processing and Multiple Keys

As one can see from the abstract class definitions, the framework also supports submitting jobs that use Binary and XML based Mappers. To use Mappers derived from these types, a “format” submission parameter is required. The supported values are Text, Binary, and XML, with “Text” being the default.

To submit a binary streaming job one just has to use a Mapper derived from the MapperBaseBinary abstract class and use the binary format specification:

-format Binary

In this case the input into the Mapper will be a Stream object that represents a complete binary document instance.

To submit an XML streaming job one just has to use a Mapper derived from the MapperBaseXml abstract class and use the XML format specification, along with a node to be processed within the XML documents:

-format XML -nodename Node

In this case the input into the Mapper will be an XElement node derived from the XML document based on the nodename parameter.
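To picture what the Mapper receives, the sketch below pulls every element with a given node name out of a small XML document using LINQ to XML. It only illustrates the shape of the input; in the framework the splitting into nodes happens before the Mapper is called, and `XmlNodeSketch` and `SelectNodes` are hypothetical names used for this example.

```csharp
using System;
using System.Collections.Generic;
using System.Linq;
using System.Xml.Linq;

public static class XmlNodeSketch
{
    // Returns every element whose local name matches nodeName, ignoring namespaces.
    public static IEnumerable<XElement> SelectNodes(XDocument document, string nodeName)
    {
        return document.Descendants().Where(e => e.Name.LocalName == nodeName);
    }

    public static void Main()
    {
        var document = XDocument.Parse(
            "<Stores><Store><Name>A</Name></Store><Store><Name>B</Name></Store></Stores>");

        // Each XElement here corresponds to one call to a Map(XElement) method.
        foreach (XElement store in SelectNodes(document, "Store"))
            Console.WriteLine(store.Element("Name").Value);
    }
}
```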

Using multiple keys from the Mapper is a two-step process. Firstly the Mapper needs to be modified to output a string based key in the correct format. This is done by passing the set of string key values into the Utilities.FormatKeys() function. This concatenates the keys using the necessary tab character. Secondly, the job has to be submitted specifying the expected number of keys:

MSDN.Hadoop.Submission.Console.exe -input "stores/demographics" -output "stores/banking"

-mapper "MSDN.Hadoop.MapReduceFSharp.StoreXmlElementMapper, MSDN.Hadoop.MapReduceFSharp"

-reducer "MSDN.Hadoop.MapReduceFSharp.StoreXmlElementReducer, MSDN.Hadoop.MapReduceFSharp"

-file "%HOMEPATH%\Projects\MSDN.Hadoop.MapReduce\Release\MSDN.Hadoop.MapReduceFSharp.dll"

-nodename Store -format Xml -numberKeys 2

This parameter equates to the necessary Hadoop job configuration parameter.
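For illustration, the key formatting step amounts to joining the key parts with a tab. The sketch below is an assumption about the behaviour described above rather than the actual Utilities.FormatKeys() source; `KeyFormatSketch` is a hypothetical name.

```csharp
using System;

public static class KeyFormatSketch
{
    // Joins the individual key parts with the tab separator used between streaming fields.
    public static string FormatKeys(params string[] keys)
    {
        if (keys == null || keys.Length == 0)
            throw new ArgumentException("At least one key is required.", nameof(keys));

        return string.Join("\t", keys);
    }

    public static void Main()
    {
        // Two key parts, matching a submission with -numberKeys 2.
        Console.WriteLine(FormatKeys("Retail", "Primary Bank"));
    }
}
```

Since the tab is also the field separator, a key part that itself contains a tab would be read as an extra key, so such values would need to be cleaned before formatting.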

Samples

To demonstrate the submission framework, here are some sample Mappers and Reducers with the corresponding command line submissions:

C# Mobile Phone Range (with In-Mapper optimization)

Calculates the mobile phone query time range for a device with an In-Mapper optimization yielding just the Min and Max values:

    using System;
    using System.Collections.Generic;
    using System.Linq;
    using System.Text;
    using MSDN.Hadoop.MapReduceBase;

    namespace MSDN.Hadoop.MapReduceCSharp
    {
        public class MobilePhoneRangeMapper : MapperBaseText<TimeSpan>
        {
            // Running (Min, Max) query time per device platform
            private Dictionary<string, Tuple<TimeSpan, TimeSpan>> ranges;

            private Tuple<string, TimeSpan> GetLineValue(string value)
            {
                try
                {
                    string[] splits = value.Split('\t');
                    string devicePlatform = splits[3];
                    TimeSpan queryTime = TimeSpan.Parse(splits[1]);
                    return new Tuple<string, TimeSpan>(devicePlatform, queryTime);
                }
                catch (Exception)
                {
                    return null;
                }
            }

            public override void Setup()
            {
                this.ranges = new Dictionary<string, Tuple<TimeSpan, TimeSpan>>();
            }

            public override IEnumerable<Tuple<string, TimeSpan>> Map(string value)
            {
                var range = GetLineValue(value);
                if (range != null)
                {
                    if (ranges.ContainsKey(range.Item1))
                    {
                        var original = ranges[range.Item1];
                        if (range.Item2 < original.Item1)
                        {
                            // Update Min amount
                            ranges[range.Item1] = new Tuple<TimeSpan, TimeSpan>(range.Item2, original.Item2);
                        }
                        if (range.Item2 > original.Item2)
                        {
                            // Update Max amount
                            ranges[range.Item1] = new Tuple<TimeSpan, TimeSpan>(original.Item1, range.Item2);
                        }
                    }
                    else
                    {
                        ranges.Add(range.Item1, new Tuple<TimeSpan, TimeSpan>(range.Item2, range.Item2));
                    }
                }

                // Nothing is emitted per line; the results are published in Cleanup
                return Enumerable.Empty<Tuple<string, TimeSpan>>();
            }

            public override IEnumerable<Tuple<string, TimeSpan>> Cleanup()
            {
                foreach (var range in ranges)
                {
                    yield return new Tuple<string, TimeSpan>(range.Key, range.Value.Item1);
                    yield return new Tuple<string, TimeSpan>(range.Key, range.Value.Item2);
                }
            }
        }

        public class MobilePhoneRangeReducer : ReducerBase<TimeSpan, Tuple<TimeSpan, TimeSpan>>
        {
            public override IEnumerable<Tuple<string, Tuple<TimeSpan, TimeSpan>>> Reduce(string key, IEnumerable<TimeSpan> value)
            {
                var baseRange = new Tuple<TimeSpan, TimeSpan>(TimeSpan.MaxValue, TimeSpan.MinValue);
                var rangeValue = value.Aggregate(baseRange, (accSpan, timespan) =>
                    new Tuple<TimeSpan, TimeSpan>(
                        (timespan < accSpan.Item1) ? timespan : accSpan.Item1,
                        (timespan > accSpan.Item2) ? timespan : accSpan.Item2));

                yield return new Tuple<string, Tuple<TimeSpan, TimeSpan>>(key, rangeValue);
            }
        }
    }

MSDN.Hadoop.Submission.Console.exe -input "mobilecsharp/data" -output "mobilecsharp/querytimes"

-mapper "MSDN.Hadoop.MapReduceCSharp.MobilePhoneRangeMapper, MSDN.Hadoop.MapReduceCSharp"

-reducer "MSDN.Hadoop.MapReduceCSharp.MobilePhoneRangeReducer, MSDN.Hadoop.MapReduceCSharp"

-file "%HOMEPATH%\MSDN.Hadoop.MapReduceCSharp\Release\MSDN.Hadoop.MapReduceCSharp.dll"

C# Mobile Min (with Mapper, Combiner, Reducer)

Calculates the mobile phone minimum time for a device with a combiner yielding just the Min value:

    using System;
    using System.Collections.Generic;
    using System.Linq;
    using System.Text;
    using MSDN.Hadoop.MapReduceBase;

    namespace MSDN.Hadoop.MapReduceCSharp
    {
        public class MobilePhoneMinMapper : MapperBaseText<TimeSpan>
        {
            private Tuple<string, TimeSpan> GetLineValue(string value)
            {
                try
                {
                    string[] splits = value.Split('\t');
                    string devicePlatform = splits[3];
                    TimeSpan queryTime = TimeSpan.Parse(splits[1]);
                    return new Tuple<string, TimeSpan>(devicePlatform, queryTime);
                }
                catch (Exception)
                {
                    return null;
                }
            }

            public override IEnumerable<Tuple<string, TimeSpan>> Map(string value)
            {
                var returnVal = GetLineValue(value);
                if (returnVal != null) yield return returnVal;
            }
        }

        public class MobilePhoneMinCombiner : CombinerBase<TimeSpan>
        {
            public override IEnumerable<Tuple<string, TimeSpan>> Combine(string key, IEnumerable<TimeSpan> value)
            {
                yield return new Tuple<string, TimeSpan>(key, value.Min());
            }
        }

        public class MobilePhoneMinReducer : ReducerBase<TimeSpan, TimeSpan>
        {
            public override IEnumerable<Tuple<string, TimeSpan>> Reduce(string key, IEnumerable<TimeSpan> value)
            {
                yield return new Tuple<string, TimeSpan>(key, value.Min());
            }
        }
    }

MSDN.Hadoop.Submission.Console.exe -input "mobilecsharp/data" -output "mobilecsharp/querytimes"

-mapper "MSDN.Hadoop.MapReduceCSharp.MobilePhoneMinMapper, MSDN.Hadoop.MapReduceCSharp"

-reducer "MSDN.Hadoop.MapReduceCSharp.MobilePhoneMinReducer, MSDN.Hadoop.MapReduceCSharp"

-combiner "MSDN.Hadoop.MapReduceCSharp.MobilePhoneMinCombiner, MSDN.Hadoop.MapReduceCSharp"

-file "%HOMEPATH%\MSDN.Hadoop.MapReduceCSharp\Release\MSDN.Hadoop.MapReduceCSharp.dll"

F# Mobile Phone Query

Calculates the mobile phone range and average time for a device:

    namespace MSDN.Hadoop.MapReduceFSharp

    open System
    open MSDN.Hadoop.MapReduceBase

    type MobilePhoneQueryMapper() =
        inherit MapperBaseText<TimeSpan>()

        // Performs the split into key/value
        let splitInput (value:string) =
            try
                let splits = value.Split('\t')
                let devicePlatform = splits.[3]
                let queryTime = TimeSpan.Parse(splits.[1])
                Some(devicePlatform, queryTime)
            with
            // Skip malformed lines: too few columns or an unparsable query time
            | :? System.ArgumentException
            | :? System.FormatException
            | :? System.OverflowException
            | :? System.IndexOutOfRangeException -> None

        // Map the data from input name/value to output name/value
        override self.Map (value:string) =
            seq {
                let result = splitInput value
                if result.IsSome then
                    yield result.Value
            }

    type MobilePhoneQueryReducer() =
        inherit ReducerBase<TimeSpan, (TimeSpan*TimeSpan*TimeSpan)>()

        override self.Reduce (key:string) (values:seq<TimeSpan>) =
            let initState = (TimeSpan.MaxValue, TimeSpan.MinValue, 0L, 0L)
            let (minValue, maxValue, totalValue, totalCount) =
                values |>
                Seq.fold (fun (minValue, maxValue, totalValue, totalCount) value ->
                    (min minValue value, max maxValue value, totalValue + (int64)(value.TotalSeconds), totalCount + 1L) ) initState

            // Emits (Min, Average, Max) for the key
            Seq.singleton (key, (minValue, TimeSpan.FromSeconds((float)(totalValue/totalCount)), maxValue))

MSDN.Hadoop.Submission.Console.exe -input "mobile/data" -output "mobile/querytimes"

-mapper "MSDN.Hadoop.MapReduceFSharp.MobilePhoneQueryMapper, MSDN.Hadoop.MapReduceFSharp"

-reducer "MSDN.Hadoop.MapReduceFSharp.MobilePhoneQueryReducer, MSDN.Hadoop.MapReduceFSharp"

-file "%HOMEPATH%\MSDN.Hadoop.MapReduceFSharp\Release\MSDN.Hadoop.MapReduceFSharp.dll"

F# Store XML (XML in Samples)

Calculates the total revenue, within the store XML, based on demographic data; also demonstrating multiple keys:

    namespace MSDN.Hadoop.MapReduceFSharp

    open System
    open System.Collections.Generic
    open System.Linq
    open System.IO
    open System.Text
    open System.Xml
    open System.Xml.Linq
    open MSDN.Hadoop.MapReduceBase

    type StoreXmlElementMapper() =
        inherit MapperBaseXml<decimal>()

        override self.Map (element:XElement) =
            let aw = "http://schemas.microsoft.com/sqlserver/2004/07/adventure-works/StoreSurvey"
            let demographics = element.Element(XName.Get("Demographics")).Element(XName.Get("StoreSurvey", aw))

            seq {
                if not(demographics = null) then
                    let business = demographics.Element(XName.Get("BusinessType", aw)).Value
                    let bank = demographics.Element(XName.Get("BankName", aw)).Value
                    // Two-part key: business type and bank name
                    let key = Utilities.FormatKeys(business, bank)
                    let sales = Decimal.Parse(demographics.Element(XName.Get("AnnualSales", aw)).Value)
                    yield (key, sales)
            }

    type StoreXmlElementReducer() =
        inherit ReducerBase<decimal, int>()

        override self.Reduce (key:string) (values:seq<decimal>) =
            let totalRevenue =
                values |> Seq.sum

            Seq.singleton (key, int totalRevenue)

MSDN.Hadoop.Submission.Console.exe -input "stores/demographics" -output "stores/banking"

-mapper "MSDN.Hadoop.MapReduceFSharp.StoreXmlElementMapper, MSDN.Hadoop.MapReduceFSharp"

-reducer "MSDN.Hadoop.MapReduceFSharp.StoreXmlElementReducer, MSDN.Hadoop.MapReduceFSharp"

-file "%HOMEPATH%\MSDN.Hadoop.MapReduceFSharp\bin\Release\MSDN.Hadoop.MapReduceFSharp.dll"

-nodename Store -format Xml -numberKeys 2

F# Binary Document (Word and PDF Documents)

Calculates the pages per author for a combination of Office Word and PDF documents:

    namespace MSDN.Hadoop.MapReduceFSharp

    open System
    open System.Collections.Generic
    open System.Linq
    open System.IO
    open System.Text
    open System.Xml
    open System.Xml.Linq
    open DocumentFormat.OpenXml
    open DocumentFormat.OpenXml.Packaging
    open DocumentFormat.OpenXml.Wordprocessing
    open iTextSharp.text
    open iTextSharp.text.pdf
    open MSDN.Hadoop.MapReduceBase

    type OfficePageMapper() =
        inherit MapperBaseBinary<int>()

        // Classifies a file extension as Word, PDF, or unsupported
        let (|WordDocument|PdfDocument|UnsupportedDocument|) extension =
            if String.Equals(extension, ".docx", StringComparison.InvariantCultureIgnoreCase) then
                WordDocument
            else if String.Equals(extension, ".pdf", StringComparison.InvariantCultureIgnoreCase) then
                PdfDocument
            else
                UnsupportedDocument

        let dc = XNamespace.Get("http://purl.org/dc/elements/1.1/")
        let cp = XNamespace.Get("http://schemas.openxmlformats.org/package/2006/metadata/core-properties")
        let unknownAuthor = "unknown author"
        let authorKey = "Author"

        let getAuthorsWord (document:WordprocessingDocument) =
            let coreFilePropertiesXDoc = XElement.Load(document.CoreFilePropertiesPart.GetStream())
            // Take the first dc:creator element and split based on a ";"
            let creators = coreFilePropertiesXDoc.Elements(dc + "creator")
            if Seq.isEmpty creators then
                [| unknownAuthor |]
            else
                let creator = (Seq.head creators).Value
                if String.IsNullOrWhiteSpace(creator) then
                    [| unknownAuthor |]
                else
                    creator.Split(';')

        let getPagesWord (document:WordprocessingDocument) =
            // return page count
            Int32.Parse(document.ExtendedFilePropertiesPart.Properties.Pages.Text)

        let getAuthorsPdf (document:PdfReader) =
            // For PDF documents perform the split on a ","
            if document.Info.ContainsKey(authorKey) then
                let creators = document.Info.[authorKey]
                if String.IsNullOrWhiteSpace(creators) then
                    [| unknownAuthor |]
                else
                    creators.Split(',')
            else
                [| unknownAuthor |]

        let getPagesPdf (document:PdfReader) =
            // return page count
            document.NumberOfPages

        // Map the data from input name/value to output name/value
        override self.Map (filename:string) (document:Stream) =
            let result =
                match Path.GetExtension(filename) with
                | WordDocument ->
                    // Get access to the word processing document from the input stream
                    use document = WordprocessingDocument.Open(document, false)
                    // Process the word document with the mapper
                    let pages = getPagesWord document
                    let authors = (getAuthorsWord document)
                    // close document
                    document.Close()
                    Some(pages, authors)
                | PdfDocument ->
                    // Get access to the pdf processing document from the input stream
                    let document = new PdfReader(document)
                    // Process the pdf document with the mapper
                    let pages = getPagesPdf document
                    let authors = (getAuthorsPdf document)
                    // close document
                    document.Close()
                    Some(pages, authors)
                | UnsupportedDocument ->
                    None

            // Emit one (author, pages) pair per author of the document
            if result.IsSome then
                snd result.Value
                |> Seq.map (fun author -> (author, fst result.Value))
            else
                Seq.empty

    type OfficePageReducer() =
        inherit ReducerBase<int, int>()

        override self.Reduce (key:string) (values:seq<int>) =
            let totalPages =
                values |> Seq.sum

            Seq.singleton (key, totalPages)

MSDN.Hadoop.Submission.Console.exe -input "office/documents" -output "office/authors"

-mapper "MSDN.Hadoop.MapReduceFSharp.OfficePageMapper, MSDN.Hadoop.MapReduceFSharp"

-reducer "MSDN.Hadoop.MapReduceFSharp.OfficePageReducer, MSDN.Hadoop.MapReduceFSharp"

-combiner "MSDN.Hadoop.MapReduceFSharp.OfficePageReducer, MSDN.Hadoop.MapReduceFSharp"

-file "%HOMEPATH%\MSDN.Hadoop.MapReduceFSharp\bin\Release\MSDN.Hadoop.MapReduceFSharp.dll"

-file "C:\Reference Assemblies\itextsharp.dll" -format Binary

Optional Parameters

A few optional parameters are provided to support some additional Hadoop Streaming options.

-numberReducers X

As expected this specifies the maximum number of reducers to use.

-debug

This option turns on verbose mode and specifies a job configuration to keep failed task outputs.

To view the supported options one can use the help parameter, which displays:

Command Arguments:

-input (Required=true) : Input Directory or Files

-output (Required=true) : Output Directory

-mapper (Required=true) : Mapper Class

-reducer (Required=true) : Reducer Class

-combiner (Required=false) : Combiner Class (Optional)

-format (Required=false) : Input Format |Text(Default)|Binary|Xml|

-numberReducers (Required=false) : Number of Reduce Tasks (Optional)

-numberKeys (Required=false) : Number of MapReduce Keys (Optional)

-file (Required=true) : Processing Files (Must include Map and Reduce Class files)

-nodename (Required=false) : XML Processing Nodename (Optional)

-debug (Required=false) : Turns on Debugging Options

UI Submission

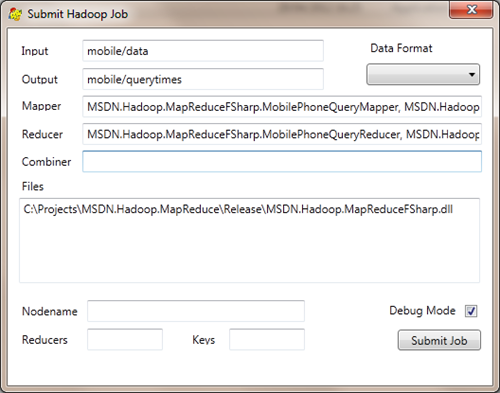

The provided submission framework works from the command line. However, there is nothing to stop one submitting the job using a UI, albeit a command console is still opened. To this end I have put together a simple UI that supports submitting Hadoop jobs.

This simple UI supports all the necessary options for submitting jobs.

Code Download

As mentioned, the executables and source code can be downloaded from:

https://code.msdn.microsoft.com/Framework-for-Composing-af656ef7

The source includes not only the .Net submission framework but also all the Java classes needed to support the Binary and XML job submissions. This relies on a custom Streaming JAR, which should be copied to the Hadoop lib directory. There are two versions of the Streaming JAR: one for running in Azure and one for running locally. The difference is that they have been compiled with different versions of the Java compiler. Just remember to use the appropriate version (dropping the -local and -azure prefixes) when copying to your Hadoop lib folder.

To use the code one just needs to reference the EXEs in the Release directory. This folder also contains the MSDN.Hadoop.MapReduceBase.dll that contains the abstract base class definitions.

Moving Forward

In a separate post I will cover what is actually happening under the covers.

As always if you find the code useful and/or use this for your MapReduce jobs, or just have some comments, please do let me know.

Comments

- Anonymous (May 03, 2012): Why use this or even write Hadoop jobs with Java when there is Pig? More than 80% of Hadoop jobs are being run with Pig scripts in large corps.

- Anonymous (May 03, 2012): I do like Pig, but not everything that can be done with MapReduce can be achieved with Pig.

- Anonymous (May 23, 2012): I wanted to use the DLL for passing a job to Hadoop. But how will I pass the credentials, the login and password for Hadoop? How will the job run in my account?

- Anonymous (May 23, 2012): For example, before I used to log in to hadooponazure and create a job using a C# mapper and reducer. Now I can pass the mapper and reducer class, but how will I pass the credentials for my hadooponazure account?

- Anonymous (May 24, 2012): Currently I have not done anything around hooking up a RunAs for the command options, but this would be simple enough. Currently it will run under the account the submission job runs under.

- Anonymous (May 29, 2012): Hi Carl, along with multiple mappers, can we expect support for multiple combiners in the first release?

- Anonymous (May 31, 2012): At the moment one can only specify the number of reducers.

- Anonymous (June 04, 2012): Thanks Carl for the info.