Tasks and Continuations: Available for Download Today!

The most difficult problem that a scheduler or a thread pool has to solve is figuring out how many running threads to have at any given moment. Create too many threads and the CPUs are oversubscribed. Create too few and the CPUs are underutilized. The straightforward setup – one thread per core – doesn’t work when threads block. During an uncooperative blocking operation, such as a call to Sleep or WaitForSingleObject, a thread is not using the CPU but is still holding on to other operating system resources, such as the stack. So the scheduler has to detect a blocking operation and quickly spin up a new thread – but not too quickly or else thread explosion ensues. This is a very hard problem to solve.

Blocking is undesirable in other situations as well. A blocked GUI thread cannot service the message loop – meaning that the application becomes unresponsive and the user sees a “spinning doughnut”.

So what’s a programmer to do?

Typically, we use blocking synchronization when the results of a pending operation are needed before the next operation can proceed. In effect, we’re saying to the thread: “I’ll be here waiting for you to finish, and then I’ll tell you what to do next”.

What if, instead of waiting, we could say “when you’re done with this task, continue with that one, and in the meantime I’ll go away to do some other work”? Doing other work can mean processing the message loop, or scheduling other tasks – which naturally can run in parallel with each other.
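
As a rough illustration of that idea in standard C++ rather than PPL, the sketch below hands a piece of work and its follow-up step to another thread and returns to the caller at once. The helper name run_then is hypothetical and is not part of PPL or the sample pack; the code assumes a C++14 compiler.

```cpp
#include <cassert>
#include <future>
#include <string>
#include <utility>
#include <vector>

// Hypothetical helper (not a PPL API): run `work` on another thread and, as soon
// as it returns, run `next` on that same thread. The caller is not blocked.
template <typename Work, typename Next>
std::future<void> run_then(Work work, Next next) {
    return std::async(std::launch::async,
                      [work = std::move(work), next = std::move(next)]() mutable {
                          work();
                          next();
                      });
}

// Demo: returns the order in which the two steps ran.
std::vector<std::string> demo_run_then() {
    std::vector<std::string> log;
    auto done = run_then([&log] { log.push_back("work"); },
                         [&log] { log.push_back("next"); });
    // The caller could pump messages or schedule more work here.
    done.get();  // wait only so that the demo can safely read `log`
    assert(log.size() == 2);  // both steps have run by now
    return log;
}
```

Note that a std::future returned by std::async waits in its destructor, so a real "fire and forget" design would need a different completion mechanism; the sketch only shows the ordering of the two steps.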

This is exactly what PPL tasks and continuations, available for download today from the ConcRT Sample Pack, aim to accomplish.

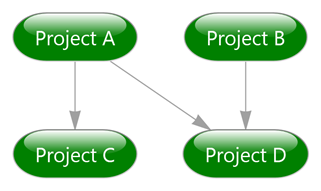

Imagine that you’re implementing a build tool such as make or msbuild, which builds the projects in the dependency order. Your configuration contains projects A, B, C and D, where project C depends on project A, and project D depends on both projects A and B:

This is how you do it (you will find the full example in the sample pack):

    Project A = ...
    Project B = ...
    Project C = ...
    Project D = ...

    task<void> a([=]() {
        Build(A);
    });

    task<void> b([=]() {
        Build(B);
    });

    // Build C after A
    auto c = a.then([=]() {
        Build(C);
    });

    // Build D after both A and B
    auto d = (a && b).then([=]() {
        Build(D);
    });

    c.wait();
    d.wait();

First, you create a task, a, passing a C++ lambda to its constructor. The task starts running as soon as it is created – or, more precisely, as soon as the underlying scheduler finds an available hardware thread that can execute it.

The Build function returns void, and hence the type of the task is void. A more advanced implementation would probably return an error code, which would be the type of the task. This is how it could be done:

    task<int> a([=]() {
        return Build(A);
    });
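
For readers without the sample pack at hand, roughly the same shape can be sketched with the standard library: std::async with std::launch::async starts the work right away, and the returned std::future<int> carries the error code. Build here is a hypothetical stand-in that treats an empty project name as a failure, since the post does not show the real function; build_async and build_and_wait are likewise illustrative names.

```cpp
#include <cassert>
#include <future>
#include <string>
#include <utility>

// Hypothetical stand-in for the post's Build(): 0 means success, nonzero means failure.
int Build(const std::string& project) {
    return project.empty() ? 1 : 0;
}

// Start the build on another thread immediately; the future carries the error code.
std::future<int> build_async(std::string project) {
    return std::async(std::launch::async,
                      [project = std::move(project)] { return Build(project); });
}

// Usage: the caller decides when (or whether) to wait for the result.
int build_and_wait(const std::string& project) {
    std::future<int> result = build_async(project);
    assert(result.valid());
    return result.get();
}
```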

Next, you create a task b, which is also scheduled to start immediately, and begins building the project B.

Notice that task b does not need to wait for task a to finish, nor is it guaranteed to run in parallel with a. You did not specify any dependencies between the two tasks, leaving it up to the underlying Concurrency Runtime to schedule them as it sees fit – and it will schedule the tasks in parallel if enough CPU resources are available.

Next, you create a new task, c, which is a continuation of task a. The then method creates a new task. Its parameter is a lambda, and the lambda’s parameter is the return value of the antecedent task.

In our case, this type is void, but if we were to define the task a with type int, the call to then could look like this:

    auto c = a.then([=](int errorcode) -> int {
        if (errorcode == 0)
        {
            // Build C only if A succeeded
            return Build(C);
        }
        else
        {
            return errorcode;
        }
    });
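
The data flow of such a continuation can be sketched in standard C++ as follows. The then helper below is hypothetical and, unlike a PPL continuation, it parks a helper thread in get() until the antecedent finishes, so it illustrates only how the error code travels from one step to the next. BuildC and build_c_after are stand-ins invented for this sketch, which assumes a C++14 compiler.

```cpp
#include <atomic>
#include <cassert>
#include <future>

std::atomic<int> builds_of_c{0};

// Hypothetical stand-in for building project C: counts calls and reports success.
int BuildC() {
    ++builds_of_c;
    return 0;
}

// Hypothetical helper (not a PPL API): run `continuation` with the antecedent's result.
// The helper thread blocks in get(), which a real PPL continuation is meant to avoid.
template <typename T, typename F>
auto then(std::shared_future<T> antecedent, F continuation) {
    assert(antecedent.valid());
    return std::async(std::launch::async,
                      [antecedent, continuation]() mutable {
                          return continuation(antecedent.get());
                      });
}

// Build C only if the antecedent reported success; otherwise pass its error code on.
int build_c_after(int error_code_of_a) {
    std::shared_future<int> a =
        std::async(std::launch::async, [error_code_of_a] { return error_code_of_a; }).share();
    auto c = then(a, [](int errorcode) { return errorcode == 0 ? BuildC() : errorcode; });
    return c.get();
}
```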

The next step is to schedule the build of the project D. The operator && creates a task that joins together multiple tasks of the same type. The task returned by the join is somewhat unusual, in that it has no action. It does one thing and one thing only – join multiple tasks. Like all tasks, it can be waited on, or continued – which is how you’d typically use it.
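
One way to picture a join that has no action of its own is a countdown: each antecedent reports its completion, and whichever one arrives last triggers the continuation. The sketch below uses only the standard library; the Join class and its arrive method are hypothetical names, and this is not a description of how the sample pack implements operator &&, which the post does not cover.

```cpp
#include <atomic>
#include <cassert>
#include <functional>
#include <thread>
#include <utility>

// Hypothetical countdown join: does no work itself, it only fires `continuation`
// once `count` antecedents have arrived.
class Join {
public:
    Join(int count, std::function<void()> continuation)
        : remaining_(count), continuation_(std::move(continuation)) {}

    // Called by each antecedent when it finishes; the last caller runs the continuation.
    void arrive() {
        const int before = remaining_.fetch_sub(1);
        assert(before > 0);  // more arrivals than antecedents would be a bug
        if (before == 1) {
            continuation_();
        }
    }

private:
    std::atomic<int> remaining_;
    std::function<void()> continuation_;
};

// Demo: "build" A and B on two threads, then D once both are done.
// Returns how many antecedents had finished when D started.
int demo_join() {
    std::atomic<int> finished{0};
    int seen_by_d = -1;
    Join d(2, [&] { seen_by_d = finished.load(); });
    std::thread a([&] { ++finished; d.arrive(); });
    std::thread b([&] { ++finished; d.arrive(); });
    a.join();
    b.join();
    return seen_by_d;
}
```

Because the continuation runs on whichever antecedent thread arrives last, no thread has to sit and wait for the others.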

Finally, we’re waiting for the last two tasks – c and d – to finish by explicitly waiting on them. This is a usual blocking wait, which would be undesirable in a GUI application but is perfectly fine in a command-line program.
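
Putting the walkthrough together, the same dependency graph can be approximated with std::async and std::shared_future. This is a sketch of the ordering only: the waits inside c and d each block a thread, which is exactly what PPL continuations are meant to avoid, and Build is a hypothetical stand-in that just records the project name.

```cpp
#include <algorithm>
#include <cassert>
#include <cstddef>
#include <future>
#include <mutex>
#include <string>
#include <vector>

std::mutex log_mutex;
std::vector<std::string> build_log;

// Hypothetical stand-in for Build(): records the order in which projects are built.
void Build(const std::string& project) {
    std::lock_guard<std::mutex> lock(log_mutex);
    build_log.push_back(project);
}

// Position of a project in the build log; call only after build_all() has returned.
std::ptrdiff_t position(const std::string& project) {
    auto it = std::find(build_log.begin(), build_log.end(), project);
    assert(it != build_log.end());  // the project must have been built
    return it - build_log.begin();
}

void build_all() {
    // A and B have no dependencies and may run in parallel.
    std::shared_future<void> a = std::async(std::launch::async, [] { Build("A"); }).share();
    std::shared_future<void> b = std::async(std::launch::async, [] { Build("B"); }).share();

    // C waits for A; D waits for both A and B.
    auto c = std::async(std::launch::async, [a] { a.wait(); Build("C"); });
    auto d = std::async(std::launch::async, [a, b] { a.wait(); b.wait(); Build("D"); });

    c.wait();
    d.wait();
}
```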

How would you do this in a GUI application? You already know how:

    (c && d).then([] () {
        ReportResults();
    });

    // now if you excuse me, I have messages to pump
    return;
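
To make the hand-off to the message loop concrete, here is a minimal standard C++ sketch of background work that posts its reporting step back to the thread that pumps messages. MessageQueue, post and pump_one are hypothetical names for this illustration; a real GUI application would use its framework's own message loop, and the post does not say which thread a PPL continuation runs on.

```cpp
#include <cassert>
#include <condition_variable>
#include <functional>
#include <mutex>
#include <queue>
#include <thread>
#include <utility>

// Hypothetical stand-in for a GUI message queue.
class MessageQueue {
public:
    // Called from any thread to hand a message to the pumping thread.
    void post(std::function<void()> message) {
        {
            std::lock_guard<std::mutex> lock(mutex_);
            messages_.push(std::move(message));
        }
        ready_.notify_one();
    }

    // Called by the pumping thread: wait for one message and run it.
    void pump_one() {
        std::function<void()> message;
        {
            std::unique_lock<std::mutex> lock(mutex_);
            ready_.wait(lock, [this] { return !messages_.empty(); });
            message = std::move(messages_.front());
            messages_.pop();
        }
        assert(message);  // posted messages must be callable
        message();
    }

private:
    std::mutex mutex_;
    std::condition_variable ready_;
    std::queue<std::function<void()>> messages_;
};

// Demo: the background work posts its "report results" step instead of being waited on.
// Returns true if the report ran on the pumping thread.
bool demo_report_on_pumping_thread() {
    MessageQueue queue;
    const std::thread::id pumping_thread = std::this_thread::get_id();
    bool reported_on_pumping_thread = false;

    std::thread background([&] {
        // ... the build work would happen here ...
        queue.post([&] {
            reported_on_pumping_thread = (std::this_thread::get_id() == pumping_thread);
        });
    });

    queue.pump_one();  // the message loop picks up the report
    background.join();
    return reported_on_pumping_thread;
}
```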

Those of you keeping up with the news from our friends in the PFX team must be very familiar with the concept of continuations. We have borrowed many ideas from the managed world, but tried hard to make the programming model feel natural for the C++ developer, and to preserve the overall “look and feel” of the PPL API. Thus, you can seamlessly use tasks and continuations together with existing and new parallel algorithms, such as parallel_for and parallel_sort.

The functionality in the sample pack has a few limitations compared to the version that we eventually plan to integrate into the product, mainly because we wanted to enable a header-only release without having to ask early adopters to install a whole new CRT, which involves copying of several lib and dll files. We tried to keep these limitations to a minimum, so you can still play with the code and give us feedback.

You’ll find several samples that demonstrate uses of PPL tasks, including a few concepts that were not covered in this post. In my next installment, I’ll explain these concepts and show a few more advanced samples.

Please download the sample pack here and send us your feedback. Specifically, we’re interested in the following:

- Do you find the concept of tasks and continuations easy to understand?

- Do you find it useful?

- Would you use tasks and continuations in your C++ projects?

- What did we get right, and what did we get wrong?

- What’s missing and what is superfluous?

We’re eagerly awaiting your feedback.

To be continued…

Artur Laksberg

Concurrency Runtime Team

Comments

Anonymous

March 09, 2011

Petke -- thanks for the feedback! I agree the && and || operators are somewhat controversial -- succinct but cryptic at the same time. We do have the when_all construct, which takes two iterators. So while you cannot do this:

    when_all(a,b)

you can do this:

    task<T> tasks[] = {a,b};
    when_all(&tasks[0], &tasks[2]);

Artur Laksberg

Anonymous

March 09, 2011

Another question that just came to me. How does this relate to the C++0x futures, promises, packaged_task etc? Are these basically rival libraries? Thanks.

Anonymous

March 10, 2011

Petke, another great question! PPL Tasks enable wait-free composition of asynchronous operations. This is their main differentiator. We worked hard to integrate PPL tasks seamlessly with the existing PPL constructs. Cancellation is one example – you can have a parallel_for inside a task, and cancelling the task will cancel the parallel_for. We also work on integrating C++0x futures with ConcRT. We want both tasks and C++0x futures to use the same ConcRT scheduler under the covers, so they would not compete for hardware resources in the same application. We’re also considering whether we should integrate them even further – for example, we can provide a task constructor that takes a future as a parameter. Another idea is to do away with the task_completion_event and use the std::promise instead – thus reducing the concept count from two new classes to just one (plus a handful of new global functions). What we do depends on the product schedule and your feedback -- so keep it coming.

Anonymous

March 10, 2011

Also, the code does not compile if _HAS_EXCEPTIONS=0 is defined. Also, in response to my previous question, I realized that tasks are copyable with no problem, so it appears to be safe to store the return values of continue_with(), etc by value in containers, for example.

Anonymous

March 11, 2011

Zach, we resisted adding the run method because we think most people will want their tasks to start right away, without having to remember to call the run method. We also wanted to keep the API set as small as possible. One less method for you to learn and for us to explain is a good thing. Other than that, if we were to introduce the run method, the things we would have to solve are:

- What happens if run on the same task is called twice on different threads? Is the second call a no-op or should it throw an exception?

- Should you call run on tasks created with continue_with? when_all/when_any?

- Should we also introduce the is_running method? Clearly, this method would be inherently unsafe, because another thread can call run at the same time (or a nanosecond later).

- What happens if you wait on a task that hasn’t started? Throw an exception, hang, or start the task automatically?

What you describe as a solution (roots of the hierarchy waiting on an event handle) is OK, but you can do it more efficiently – without burning a thread – using the task_completion_event. Here is how:

    task_completion_event<void> tce_start;
    task<void> t_start(tce_start);
    auto id1 = t_start.continue_with([]() { … });
    // build up your hierarchy here…

    // now start the whole graph:
    tce_start.set();

That said, nothing is set in stone yet. If my sample above is not convincing, let me know why. What do other people think – do we need the run method on tasks? Speak up if you have an opinion!

PS. Thanks for the bug report with _HAS_EXCEPTIONS, we’ll look into it.

Anonymous

March 11, 2011

Zach, thank you for your feedback and questions. I have tried to address them below.

a) _HAS_EXCEPTIONS is unsupported in PPL & STL in VS2010. However, you may be able to work around this issue by re-defining _HAS_EXCEPTIONS after you have finished including all the headers (where this condition would only be effective in user-code).

b) Regarding your scenario, where you want HighLevelTask1 to continue irrespective of HighLevelTask0 running to completion or canceled (error) state; there are 2 approaches I would like to discuss here:

Approach 1: You can schedule a task that cooperatively waits for highLevelTask0 to complete and, irrespective of the status (completed or canceled), schedules highLevelTask1. This would effectively address your problem, the downside to this approach being that highLevelTask1 burns a thread when it is scheduled (but this side effect is reduced to some degree with the blocking being cooperative).

    task<void> highLevelTask0(HighLevelTaskFunc);
    task<void> highLevelTask1([&]() {
        // Doesn't matter if highLevelTask0 completes or cancels, just wait for terminal state
        highLevelTask0.wait();
        // Schedule highLevelTask1 and wait
        task<void>(HighLevelTaskFunc).wait();
    });

Approach 2: Alternatively, you could schedule highLevelTaskCanc1 to be scheduled iff highLevelTask0 cancels and highLevelTask1 to be scheduled iff highLevelTask0 runs to completion (note, you would have to explicitly cancel the task highLevelTaskCanc1 in this case). The plus side is that this does not burn a thread, in contrast to Approach 1. However, please be advised that _Continue_on_cancel() is an uglified API used internally (in when_any and when_all), has not been tested, and may not work for all cases.

    task<void> highLevelTask0(HighLevelTaskFunc);
    // Schedule a continuation on highLevelTask0 if it is canceled
    auto highLevelTaskCanc1 = highLevelTask0._Continue_on_cancel(HighLevelTaskFunc);
    // Schedule a continuation on highLevelTask0 if it is not canceled
    // Cancel the continue_on_cancel task since highLevelTask0 completed successfully
    auto highLevelTask1 = highLevelTask0.continue_with([&]() {
        highLevelTaskCanc1.cancel();
        HighLevelTaskFunc();
    });

Do either of the approaches address your problem? If yes, which approach would you adopt?

Thanks, Vinod.

Anonymous

March 16, 2011

Hi Zach, yes, you're correct that in the scenario you laid out you could simply have C continue from A. One of the reasons we released this sample pack is to hear from users like you to see what kinds of scenarios you would use this in, in order to better influence our design. Another issue is whether a continue_on_cancel() should return a task to the user, which you can use to create a brand new tree of work, where anything else could be canceled. It was also pointed out to me yesterday that if so, a user cannot safely wait on any task in this "cancellation" side path without the possibility of wait() blocking forever, which would be undesirable. The code currently should simply discard the continue_on_cancel() task if the parent task is successful (i.e. exactly what you were expecting). Anyway, thanks for your input, we'll definitely keep it in mind!

Anonymous

April 11, 2011

Looks great!

- Does this mean that once this is part of the release, the task_group style of starting tasks will be deprecated?

- Continuations are very elegant, but from the point of view of CPU utilization, can we have the same effect as continuations by just using the existing task_group and calling get, since get is a cooperative blocking call? (BTW, is it also cooperative when called from a non-worker thread, i.e. an application thread? If yes, how is it possible that the runtime is also managing non-worker threads?) If yes, what does all this have to do with uncooperative blocking as mentioned at the beginning of the post?

Anonymous

April 11, 2011

I meant task_group.wait instead of .get

Anonymous

November 08, 2011

Could you please explain the difference between uncooperative blocking v.s. cooperative blocking, in terms of CPU resource allocation? Thanks.